Why We Open-Sourced Our Infrastructure Agent Architecture

The industry is adopting AI for infrastructure at wildly different speeds. The architectural guidance hasn't kept up with any of them.

We talk to infrastructure teams every week. The spectrum of where they are with AI is striking.

Some are still in copilot mode: autocomplete for Terraform, AI-assisted PR descriptions, maybe a chatbot that answers questions about their cloud setup. Useful, but passive. The AI suggests; a human does everything else.

A larger group has moved into agentic territory. They've adopted Claude Code, Codex, Cursor, or similar tools and are letting AI agents write IaC modules, fix compliance findings, generate entire pull requests. The agent isn't just suggesting — it's doing. The human is still in the driving seat, typically reviewing every step, but the agent is the one writing the code, running the commands, and opening the PRs. This is where most teams we talk to are right now, and it's where the hard questions start.

Then there's a smaller cohort already exploring the boundaries. They're adding custom tools, skills, and MCP servers to their agentic setups. They're finding the workflows that actually work for their team — and starting to think seriously about isolation, sandboxing, and blast radius for agentic work that touches real infrastructure.

Here's what all three groups have in common: they're all navigating the same architectural decisions, just at different depths. And there's almost no shared reference for how to make those decisions well.

That's why we open-sourced the Infrastructure Agents Guide: 13 chapters covering architecture, sandboxing, credentials, change control, observability, policy guardrails, and more. It's built from patterns we've learned at Cloudgeni while enabling safe AI adoption for infrastructure teams across AWS, Azure, GCP, and OCI.

It's for all of you — whether you're figuring out how to safely give Claude Code access to your cloud account, or adding custom tools and MCP integrations to an agentic setup that's already running.

The gap is architectural, not technical

There's plenty of content on how to prompt an LLM, set up tool calling, or wire a ReAct loop. What's missing is guidance for the layers that sit around the AI — the layers that determine whether your setup is a useful teammate or a liability with cloud credentials.

This is true at every level of adoption.

If you're in copilot mode and thinking about giving an agent write access for the first time, you need to answer: how do I scope credentials so it can't touch what it shouldn't? How do I ensure every change it makes goes through the same review process as a human change?

If you've already adopted agentic tools, you're hitting the next wave of questions: how do I observe what the agent actually did across a multi-step task? How do I enforce policy guardrails that go beyond hoping the system prompt holds? What's my blast radius if the agent gets something wrong?
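
The first of those questions has a simple starting point: record every tool call outside the model. Below is a minimal sketch, assuming a Python agent whose tools are plain functions. Each call is logged with a shared session ID, a step number, its arguments, its outcome, and its duration. The `AgentTrace` class and its field names are hypothetical; a production setup would more likely emit OpenTelemetry spans, but the shape of the record is what matters.

```python
import json
import time
import uuid
from functools import wraps


class AgentTrace:
    """Hypothetical append-only record of every tool call in one agent session."""

    def __init__(self, task: str):
        self.session_id = str(uuid.uuid4())
        self.task = task
        self.events: list[dict] = []

    def tool(self, fn):
        """Decorator: record each call's step, arguments, outcome, and duration."""

        @wraps(fn)
        def wrapper(*args, **kwargs):
            event = {
                "session_id": self.session_id,
                "task": self.task,
                "step": len(self.events) + 1,
                "tool": fn.__name__,
                "args": list(args),
                "kwargs": kwargs,
            }
            start = time.monotonic()
            try:
                result = fn(*args, **kwargs)
                event["status"] = "ok"
                return result
            except Exception as exc:
                event["status"] = "error"
                event["error"] = repr(exc)
                raise  # the agent still sees the failure; the trace just records it
            finally:
                event["duration_ms"] = round((time.monotonic() - start) * 1000, 1)
                self.events.append(event)

        return wrapper

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to ship to a log pipeline."""
        return "\n".join(json.dumps(e, default=str) for e in self.events)
```

Decorating each tool with `@trace.tool` is enough to reconstruct a multi-step task afterwards, including the steps that failed, without relying on the LLM's own transcript.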

If you're exploring the boundaries, the questions get more interesting: how do I add custom tools and MCP servers without opening up new attack surface? How do I isolate agentic workloads so a bad tool call can't cascade? What does a proper sandbox look like when the agent is running Terraform, not just writing it?

The guide covers all of these. Not as a linear tutorial, but as an architectural reference you can enter at whatever depth matches where you are.

Why open, why now

Three things pushed us to release this.

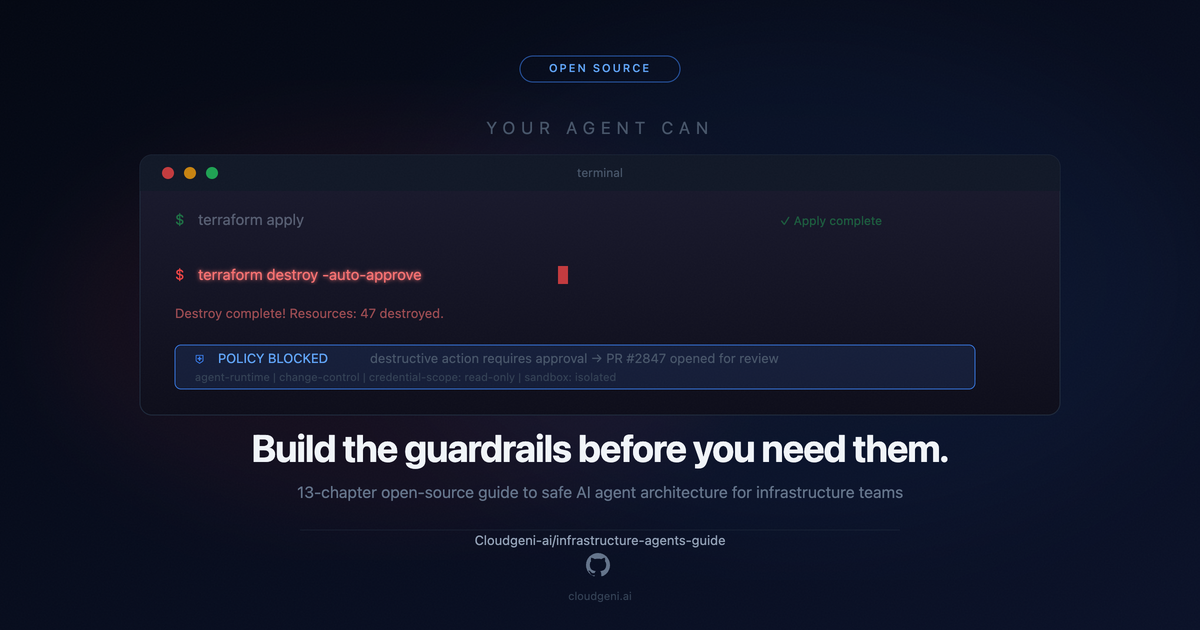

The stakes are real and rising. Infrastructure agents aren't writing blog posts — they're modifying production systems. An agent that can terraform apply can also terraform destroy. An agent that reads configs can leak secrets. An agent that auto-remediates can auto-escalate an outage. As more teams move up the adoption curve, the cost of getting the architecture wrong scales with them.

The same anti-patterns keep showing up. Long-lived credentials passed as environment variables. Agents deploying directly without PR-based change control. No observability beyond "check the LLM logs." Zero policy enforcement beyond the system prompt. These aren't edge cases — they're the default path teams end up on when there's no reference architecture to follow.
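
The first anti-pattern has a well-known alternative on AWS: mint short-lived credentials per task with STS `AssumeRole`, and attach an inline session policy that narrows the role further for that task. Here is a minimal sketch, assuming boto3, an existing role the agent is allowed to assume, and a hypothetical task that only needs to read objects under one S3 prefix (say, one workspace's Terraform state). The bucket, prefix, and function names are illustrative.

```python
import json


def build_session_policy(bucket: str, prefix: str) -> str:
    """Inline session policy: read-only access to one hypothetical S3 prefix.

    STS intersects this with the role's own policies, so it can only narrow
    what the role allows, never widen it.
    """
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/{prefix}*"],
            },
            {
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}*"]}},
            },
        ],
    }
    return json.dumps(policy)


def short_lived_agent_credentials(role_arn: str, session_name: str, policy_json: str) -> dict:
    """Assume the agent's role for 15 minutes with the session policy attached."""
    import boto3  # imported here so the policy builder can be used without boto3

    sts = boto3.client("sts")
    response = sts.assume_role(
        RoleArn=role_arn,
        RoleSessionName=session_name,  # appears in CloudTrail, so name it per task
        Policy=policy_json,
        DurationSeconds=900,  # the minimum STS allows
    )
    # Contains AccessKeyId, SecretAccessKey, SessionToken, and Expiration.
    return response["Credentials"]
```

Handing these temporary keys to the tool process for a single task, instead of exporting long-lived keys into the agent's environment, means a leaked credential expires on its own and can only do what that one task needed.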

Agent architecture shouldn't be a moat. The value of what we do at Cloudgeni is in providing the platform that makes AI adoption safe and operational at scale — not in keeping design patterns secret. If the whole ecosystem builds safer infrastructure agents, everyone wins. Including us.

A taste of what's inside

The guide is vendor-neutral with real patterns, code snippets, and multiple alternatives for each layer. Here are a few concepts to give you a sense of the depth.

Principle: agents never deploy directly

This is principle number one, and it applies whether you're giving Claude Code its first cloud credentials or running a fully autonomous pipeline. Every infrastructure change an agent produces flows through a pull request. The agent creates diffs, not deployments.

    ┌───────────────┐     ┌───────────────┐     ┌───────────────┐
    │ Agent writes  │────▶│ Opens a PR    │────▶│ CI validates  │
    │ IaC changes   │     │ for review    │     │ (plan, scan)  │
    └───────────────┘     └───────────────┘     └───────┬───────┘
                                                        │
                                                ┌───────▼────────┐
                                                │ Human approves │
                                                │ or auto-merge  │
                                                └───────┬────────┘
                                                        │
                                                ┌───────▼───────┐
                                                │ CD deploys    │
                                                └───────────────┘

The agent is not special. It earns trust through the same mechanisms humans do: code review, CI checks, and approval gates. For teams early in adoption, this means mandatory human review on every change. For teams further along, it means defining which changes are low-risk enough for lighter review and which still need a human in the loop. The pipeline is the same — what changes is how much trust the agent has earned.
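
One way to encode "how much trust the agent has earned" is a routing function that CI runs against the plan to decide which approval gate an agent-authored PR needs. This is a hypothetical sketch: the `PlanSummary` fields, the tier numbers, and the sensitive resource types are assumptions you would replace with your own rules, and the counts would come from your CI's plan output (for example, by parsing `terraform show -json` on a saved plan).

```python
from dataclasses import dataclass, field
from enum import Enum


class Review(Enum):
    AUTO_MERGE_ELIGIBLE = "auto-merge eligible"
    ONE_APPROVAL = "one human approval"
    TWO_APPROVALS = "two human approvals"


@dataclass
class PlanSummary:
    """Hypothetical summary of a CI-produced plan for one PR."""
    environment: str
    to_add: int = 0
    to_change: int = 0
    to_destroy: int = 0
    resource_types: set[str] = field(default_factory=set)


# Hypothetical: resource types this team always wants a human to look at.
SENSITIVE_TYPES = {"aws_iam_role", "aws_iam_policy", "aws_security_group"}


def required_review(plan: PlanSummary, agent_trust_tier: int) -> Review:
    """Pick the approval gate for an agent-authored PR; never returns 'deploy'."""
    # Destroying anything, or touching prod, gets the strictest gate at every tier.
    if plan.to_destroy > 0 or plan.environment == "prod":
        return Review.TWO_APPROVALS
    # Identity and network changes always get a human reviewer.
    if plan.resource_types & SENSITIVE_TYPES:
        return Review.ONE_APPROVAL
    # An agent that has earned tier 2 may auto-merge purely additive non-prod changes.
    if agent_trust_tier >= 2 and plan.to_change == 0:
        return Review.AUTO_MERGE_ELIGIBLE
    # Default for early adoption: a human reviews every change.
    return Review.ONE_APPROVAL
```

No branch returns a deployment: the most an agent can earn is auto-merge eligibility, and the merge itself still triggers the normal CD pipeline.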

The six-plane mental model

Regardless of which tools you've adopted, every infrastructure agent system decomposes into six architectural planes:

    ┌───────────────────────────────────────────────────┐
    │                YOUR INFRASTRUCTURE                │
    │   AWS / Azure / GCP / OCI    Terraform / Pulumi   │
    └─────────────────────────┬─────────────────────────┘
                              │
                   ┌──────────▼──────────┐
                   │ POLICY PLANE        │ ← What agents CAN do
                   │ (rules, approvals)  │
                   └──────────┬──────────┘
                              │
                 ┌────────────▼────────────┐
                 │ AGENT RUNTIME           │
                 │ Skills · Tools · Creds  │ ← How agents DO it
                 │ Sandboxing · Sessions   │
                 └────────────┬────────────┘
                              │
                   ┌──────────▼──────────┐
                   │ CHANGE CONTROL      │ ← How changes LAND
                   │ (PRs, validation)   │
                   └──────────┬──────────┘
                              │
                   ┌──────────▼──────────┐
                   │ OBSERVABILITY       │ ← How you SEE it
                   │ (traces, alerts)    │
                   └─────────────────────┘

This gives teams a shared vocabulary. When someone says "we need better guardrails," you can point to the Policy Plane and have a specific conversation about tool restrictions, approval gates, and autonomy tiers — instead of a vague discussion about "making the prompts safer."
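
To make "tool restrictions" and "autonomy tiers" concrete, here is a minimal sketch of a policy check that lives in the tool layer, outside the model, so it holds regardless of what the prompt says. It assumes the agent's shell tool receives a command string and executes the parsed argv directly, without a shell. The tier table, `check_shell_command`, and `PolicyViolation` are hypothetical names.

```python
import shlex

# Hypothetical autonomy tiers mapping to the terraform subcommands an agent may run.
# "apply" and "destroy" are in no tier: deployments only happen in CD after merge.
ALLOWED_TERRAFORM = {
    0: {"fmt", "validate"},
    1: {"fmt", "validate", "init", "plan"},
    2: {"fmt", "validate", "init", "plan", "graph", "providers"},
}

# Characters that could chain or redirect commands if a shell were ever involved.
SHELL_METACHARS = set(";&|<>`$")


class PolicyViolation(Exception):
    """Raised when a tool call falls outside the agent's current autonomy tier."""


def check_shell_command(command: str, tier: int) -> list[str]:
    """Return the parsed argv if allowed at this tier, else raise PolicyViolation.

    The caller should execute the returned argv directly (e.g. subprocess.run(argv)),
    never through a shell.
    """
    argv = shlex.split(command)
    if not argv:
        raise PolicyViolation("empty command")
    if any(ch in SHELL_METACHARS for arg in argv for ch in arg):
        raise PolicyViolation("shell metacharacters are not allowed")
    if argv[0] != "terraform":
        raise PolicyViolation(f"binary not allowed: {argv[0]}")
    # Skip global options such as -chdir=DIR to find the subcommand.
    sub = next((arg for arg in argv[1:] if not arg.startswith("-")), None)
    if sub not in ALLOWED_TERRAFORM.get(tier, set()):
        raise PolicyViolation(f"terraform {sub} is not allowed at tier {tier}")
    return argv
```

Leaving `apply` and `destroy` out of every tier expresses "agents never deploy directly" as enforced policy rather than as an instruction the model is trusted to follow.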

If you're just adopting your first agentic tool, this model tells you which planes you need to think about immediately (credential management, change control) versus which you can grow into (policy guardrails, advanced observability). If you're already running agents and adding tools and integrations, it helps you identify the gaps in what you've built.

Not a framework — a reference

We deliberately didn't prescribe a single stack. For each architectural layer, the guide covers multiple alternatives:

| Layer | Options covered |
|---|---|
| LLM Runtime | Claude Code SDK, OpenAI Assistants, LangChain/LangGraph, direct API |
| Task Queue | Redis Streams, BullMQ, AWS SQS, RabbitMQ, Temporal |
| Sandboxing | Docker, Modal, Azure Container Apps, AWS Lambda, Firecracker |
| Change Control | GitHub Actions, GitLab CI, Azure Pipelines, Atlantis, Spacelift |
| Observability | OpenTelemetry + Grafana, Datadog, Dash0, New Relic |

Your infrastructure is unique. Your agent architecture should fit it — not the other way around. And if you're adopting an existing agent tool rather than building from scratch, the guide helps you understand which layers that tool handles and which ones you still need to add yourself.

Who this is for

Platform engineers evaluating how to add agent capabilities to an existing stack, or how to wrap the right safety layers around tools their teams have already adopted. SREs designing guardrails for agentic workflows that touch production. DevOps leads building self-service IaC platforms where agents are first-class participants. Engineering leaders who need a reviewed architecture before expanding what AI can touch.

Whether you're giving an agent its first write permission or wiring up custom tools for a workflow that's uniquely yours, you face the same architectural decisions. The guide helps you make them deliberately instead of discovering them in a post-mortem.

Go build (safely)

The guide is on GitHub, released under CC BY 4.0 (MIT for code snippets). Read it, fork it, adapt it, contribute back.

→ github.com/Cloudgeni-ai/infrastructure-agents-guide

If you want a platform that gives you these architectural layers out of the box — sandboxing, credential management, policy guardrails, observability — so your team can adopt AI safely without building all of it from scratch, that's what Cloudgeni is. But either way — whether you're wrapping your first safety layer around an existing tool or adding your tenth MCP server to a mature setup — build it on solid architecture. The industry will thank you later.

Have questions about the guide, or a pattern you think is missing? Open an issue or start a discussion on the repo. We're here for it.