The Definitive Guide to Importing Your Cloud Resources into IaC

By Davlet Dzhakishev

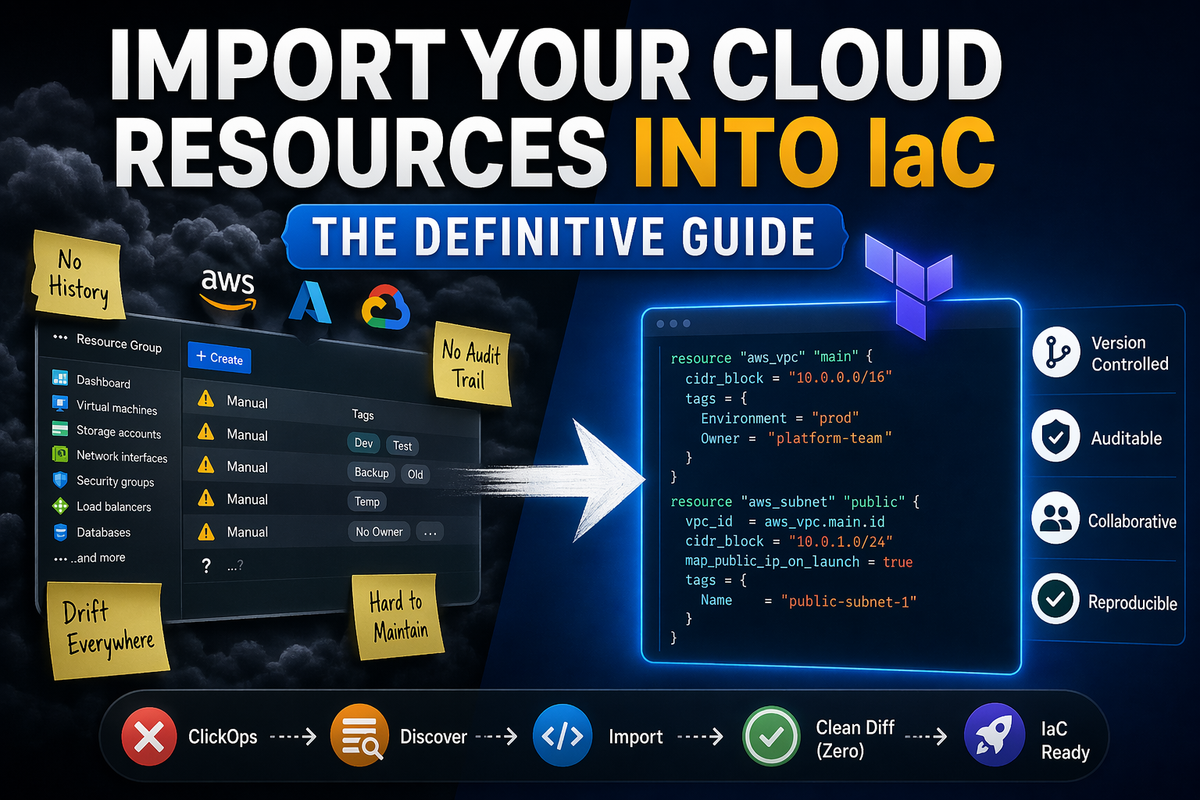

Most teams I talk to are in the same situation. Years of cloud infrastructure built the fast way — clicking through the portal, running one-off CLI commands, bash scripts copied from Stack Overflow at 2am. It works. Things are running. Nobody's touched it since.

And now someone wants it in Terraform.

This is about how to actually do that. Every approach, ranked from the one that will waste your week to the one that just finishes the job.

But first — why this matters enough to bother.

IaC is not a nice-to-have

ClickOps is fast. I know. I did it too, early on in every company I worked at. A few clicks in AWS, GCP or Azure got the job done and I didn't think much about it.

The bill comes later.

Auditors ask for a history of who changed what. "I did it in the portal" is not an answer. Git history is. Without IaC you have nothing to show them, and the conversation gets uncomfortable fast.

Security is the same story. Resources created manually almost never go through the same scrutiny as code. No pull request, no review. That storage account with public blob access went in on a Friday afternoon and nobody caught it — because there was no process to catch it.

Access control gets worse over time. When infrastructure isn't in code, the only way to understand or change it is to have console access to it. So people accumulate permissions. Broader than they need. Because the alternative is waiting on someone else to make a change.

Documentation is the one that always surprises people. The VNet with the weird CIDR range — nobody knows why it's like that. The person who built it left eighteen months ago. With IaC, the code is the documentation. Without it, you're just hoping someone remembers.

And if something actually goes down and you need to rebuild from scratch — how long does that take? With IaC you run a pipeline. Without it you're reconstructing from memory, screenshots, and whatever's still in the runbook someone wrote in 2021.

None of this is new. The arguments aren't controversial. The problem isn't convincing people that IaC matters — it's getting from an existing cloud setup into IaC without breaking anything that's live in production.

That's the part nobody writes a good guide about. So here's one.

The options, worst to best

Terraformer — don't

Terraformer is a "reverse Terraform" CLI that generates .tf files and a tfstate from existing infrastructure. It lives in the GoogleCloudPlatform GitHub org but its own README notes it was created by Waze SRE — not an official Google product. More importantly: it was officially deprecated and archived on March 16, 2026. Read-only. No more updates, no more fixes.

Provider versions in the output are already outdated. You'll spend time fighting version constraints before you even look at what it generated.

And what it generates is rough. Hardcoded IDs everywhere instead of resource references. No modules. Everything dumped flat into a folder with generic names, such as generated/aws/ec2_instance/instance.tf. You get a wall of attributes with no structure, nothing that resembles how an engineer would actually write Terraform.
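To make the hardcoded-ID problem concrete, here is a minimal sketch of the two styles side by side. The resource names, IDs, and the vpc_id variable are hypothetical and chosen for illustration; this is not actual Terraformer output.

```hcl
# Generated style (illustrative): every ID is pasted in as a literal string,
# so Terraform has no idea the instance depends on the subnet.
resource "aws_instance" "instance_1" {
  ami           = "ami-0123456789abcdef0"
  instance_type = "t3.micro"
  subnet_id     = "subnet-0123456789abcdef0"
}

# Hand-written style: the subnet is a managed resource and the instance
# references it, which gives Terraform the dependency graph for free.
variable "vpc_id" {
  type        = string
  description = "ID of the VPC the subnet lives in (hypothetical input)"
}

resource "aws_subnet" "app" {
  vpc_id     = var.vpc_id
  cidr_block = "10.0.1.0/24"
}

resource "aws_instance" "web" {
  ami           = "ami-0123456789abcdef0"
  instance_type = "t3.micro"
  subnet_id     = aws_subnet.app.id
}
```

Converting the first style into the second across hundreds of resources is the cleanup work described above.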

Coverage is also inconsistent. Some resources come out fine. Others are missing attributes, have wrong values, or fail entirely. You won't know which ones until you run terraform plan and stare at a diff that's longer than the actual configuration.

For most cloud setups — anything with real complexity — the time spent cleaning up Terraformer output is longer than writing the resource definitions yourself. The only scenario where it might still make sense is a flat setup with a handful of well-supported resources. Anything beyond that and you're fighting the tool instead of solving the problem.

terraform import — workable, but you're doing most of it yourself

This is how most teams do it. It works. It's slow.

The loop goes like this: write a resource block in HCL that matches what you're importing, run terraform import <address> <id> to pull it into state, run terraform plan to see the diff, then fix your code until the diff is gone. Then do it again for the next resource.
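As a rough illustration of the first step in that loop, this is the kind of resource block you would write by hand before running terraform import azurerm_storage_account.main <id>. The account name, resource group, region, and SKU values are placeholders I made up; in practice each one has to match the live resource.

```hcl
# Hypothetical storage account definition written before the import.
# Any value that differs from the real resource shows up as a diff
# in the next terraform plan.
resource "azurerm_storage_account" "main" {
  name                     = "mystorageaccount"
  resource_group_name      = "my-resource-group"
  location                 = "westeurope"
  account_tier             = "Standard"
  account_replication_type = "LRS"
}
```

These are only the required arguments. Optional settings that were changed in the portal over the years are what the plan diff will surface next.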

For ten resources, fine. For a real environment with hundreds of them, this is weeks of work.

Terraform 1.5 improved the situation. The new import {} block lets you declare the import in code — reviewable, version-controlled — and pair it with terraform plan -generate-config-out=generated.tf to get a starting point for the HCL. The generated code still needs cleanup, but it's a better starting point than nothing.

```hcl
import {
  to = azurerm_storage_account.main
  id = "/subscriptions/.../storageAccounts/mystorageaccount"
}
```

The real pain is drift. Your infrastructure has years of manual changes that won't show up in any generated output. Tags added through the portal. Security rules adjusted without a pipeline. Default values that Terraform thinks it owns. You run terraform plan, get a diff, fix it, run plan again, find another diff. The reconciliation loop is where the actual work lives, and it keeps going longer than you expect.
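One common way to deal with a diff you have decided not to own, such as tags managed through the portal, is the lifecycle ignore_changes setting. The sketch below assumes a storage account with placeholder values. Keep in mind that it hides that drift from the plan rather than reconciling it, so it is a judgment call, not a fix.

```hcl
resource "azurerm_storage_account" "main" {
  name                     = "mystorageaccount"
  resource_group_name      = "my-resource-group" # placeholder
  location                 = "westeurope"        # placeholder
  account_tier             = "Standard"
  account_replication_type = "LRS"

  lifecycle {
    # Assumption: tags are set outside Terraform (portal, policy, scripts),
    # so changes to them should not appear as a diff in the plan.
    ignore_changes = [tags]
  }
}
```

Every attribute you add to that list is one less thing Terraform will tell you about, so it is worth keeping the list short and commented.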

AI tools help here. You can paste a terraform plan output into an LLM and ask it to fix your HCL. You can ask it to generate resource blocks from API output. It speeds things up. But you're still driving — catching the mistakes, validating each resource, making the judgment calls.

Modules are the other problem nobody warns you about. The imported or generated code comes out flat — one resource per file, no structure, no reuse. Organizing that into proper modules that fit your existing patterns is a separate project stacked on top of the import project. It's not insurmountable, but it adds real time.
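For the restructuring step, Terraform's moved block (available since Terraform 1.1) lets you relocate an already-imported resource into a module without destroying and recreating it. A minimal sketch, assuming a hypothetical local module at ./modules/storage that declares a resource named azurerm_storage_account.this and takes a name input:

```hcl
# Hypothetical module call; the module path and its "name" input
# are assumptions for this example.
module "storage" {
  source = "./modules/storage"
  name   = "mystorageaccount"
}

# Tells Terraform that the flat, imported resource now lives inside
# the module, so state is updated instead of the resource being replaced.
moved {
  from = azurerm_storage_account.main
  to   = module.storage.azurerm_storage_account.this
}
```

A plan after this change should report the resource as moved rather than as a destroy and a create. If it shows a replacement instead, the module's arguments don't match the imported resource yet.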

If your infrastructure is small to medium, this is a solid approach. If it's large — multiple accounts, multiple regions, years of accumulated changes — it's a significant undertaking that tends to drag on and never feel quite finished.
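For the larger end of that range, import blocks can be combined with for_each so you don't write one block per resource. As far as I know this needs Terraform 1.7 or later. The sketch below uses S3 buckets because their import ID is just the bucket name; the bucket names are made up.

```hcl
locals {
  # Hypothetical map: logical key => existing bucket name
  buckets = {
    logs   = "example-logs-bucket"
    assets = "example-assets-bucket"
  }
}

# One import block covers every entry in the map.
import {
  for_each = local.buckets
  to       = aws_s3_bucket.this[each.key]
  id       = each.value
}

# The matching resource uses the same for_each so the addresses line up.
resource "aws_s3_bucket" "this" {
  for_each = local.buckets
  bucket   = each.value
}
```

This cuts down the boilerplate, but not the reconciliation: each imported bucket still has to plan clean.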

Notable commercial tools — closer, but not there yet

Two platforms are worth knowing about before we get to what we built.

Firefly scans your cloud continuously, spots unmanaged resources, and generates IaC code for them — not just Terraform and Pulumi, but also CloudFormation, Bicep, ARM, Ansible, Crossplane, CDK, Helm, and more. It handles cross-resource dependencies, detects drift, generates the remediation code automatically, and can raise the PR for you. Their newer Thinkerbell agents claim to go further and remediate autonomously. You still approve what goes to production, but you're not writing the fix yourself. Firefly is a broad cloud governance platform — codification is one pillar among several.

ControlMonkey focuses tightly on the import problem. It groups related resources into logical stacks using what they call Smart Stacking, generates both the HCL and a pre-built state file (so you skip the manual terraform import step entirely), and wires it into a GitOps CI/CD pipeline for ongoing management. Their case studies report 75-80% reduction in migration time, and they support both AWS and Azure. The distinction from Cloudgeni: they generate validated code at a point in time and hand it into your workflow. The ongoing reconciliation loop — what happens when the first plan still isn't clean — is still on you.

Spacelift, env0, and Terraform Cloud are execution and orchestration platforms — they can run plans, enforce policies, and detect drift, but none of them reverse-engineer unmanaged infrastructure into IaC for you. They're useful once you have code to run.

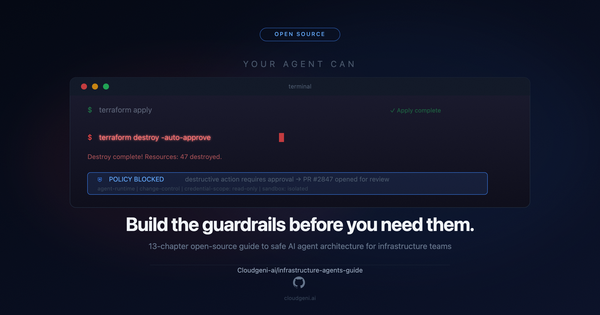

Cloudgeni — the one that actually closes the loop

Everything above involves a loop. Import → plan → diff → fix → plan → diff → fix. The process ends when you decide it's good enough. Which is never quite the same as actually done.

Cloudgeni is what we built to close that loop without someone sitting in it.

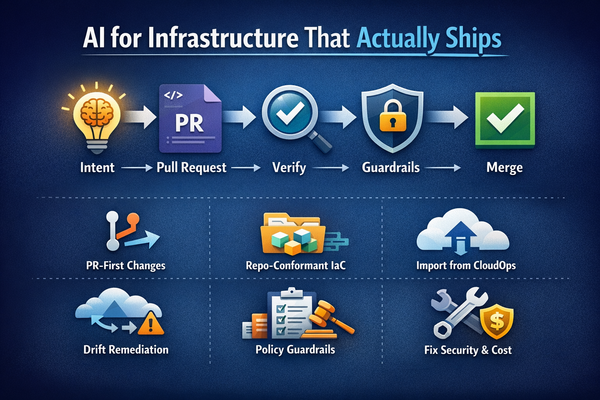

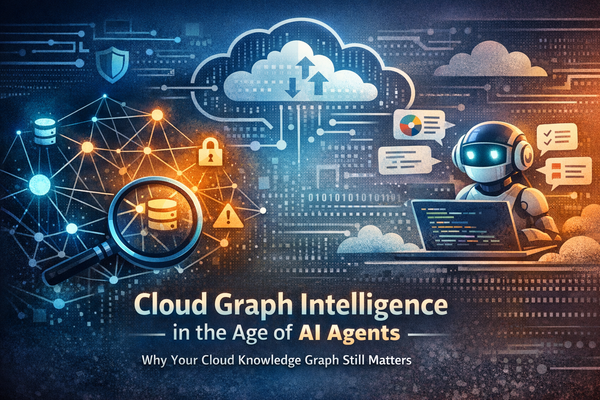

You pick what to import. A single resource, a set of resources, an entire resource group, a whole subscription. The scope is yours to define. Then Cloudgeni takes over — it generates Terraform code with actual structure. If you have existing module patterns, it follows them. If you don't, it creates modules that make architectural sense for what it found. References instead of hardcoded IDs. Real code, not a dump.

And if you don't have a CI/CD pipeline yet — which is most people starting this journey from scratch — Cloudgeni sets that up too. You're not expected to have the plumbing in place before it can help you. It builds the pipeline, then uses it to validate the import against your actual provider config and backend. The goal is to drive toward a no-op plan — code that matches reality — rather than handing you a starting point and walking away.

Not "close enough." Not "mostly clean with a few expected differences." Zero. That's what a correct import looks like, and that's the bar Cloudgeni holds itself to.

For teams managing enterprise cloud environments — multiple accounts, years of accumulated ClickOps, infrastructure that nobody fully understands anymore — this is the only approach that actually completes. The alternative is months of manual reconciliation, done inconsistently, with gaps that show up later when you least want them to.

A full walkthrough is in this 1-minute video: https://youtu.be/IqFJ6qtn6W4

What about Bicep, Pulumi, and everything else?

This whole guide has been Terraform-focused because that's where most teams are. But the problem — existing cloud resources outside any IaC tool — exists regardless of what you've standardized on.

Bicep: Azure's portal has a built-in ARM template export for any resource group. From there, az bicep decompile converts it to Bicep. The output is rough, with hardcoded values everywhere and no parameterization, but it's a real starting point for Azure-native teams who don't want to go near Terraform. Microsoft's aztfexport fills the same role for Terraform: it exports existing Azure resources to HCL and state.

Pulumi: Probably the best native import experience of any IaC tool right now. pulumi import updates state and generates code in your target language — TypeScript, Python, Go, C#. You can script bulk imports via a JSON resource list. The output needs cleanup, but it's real code in a real language, which makes the cleanup less painful.

CloudFormation: AWS built an IaC Generator directly into CloudFormation — it scans your account, you pick the resources, it generates a template. CDK Migrate can then turn that template into a CDK app if you'd rather work in code than YAML. For AWS-native shops that aren't on Terraform, this is the most integrated path available.

OpenTofu: Same import mechanics as Terraform. The import {} block, the CLI command, the same drift reconciliation loop. The difference is licensing — MPL 2.0 vs Terraform's BSL 1.1 — not the import workflow itself.

Cloudgeni works across all of these. Terraform, Bicep, Pulumi, OpenTofu — it adapts to your stack rather than asking you to change it.

Which approach fits

| Situation | Go with |
|---|---|
| Under 20 resources, relatively simple | terraform import + manual cleanup |
| 20–100 resources, no existing module structure | import {} blocks + AI-assisted HCL |
| 100+ resources, any cloud | Cloudgeni |
| Azure-only, Bicep preferred | ARM export → Bicep decompile → cleanup |
| Pulumi shop | pulumi import with a JSON bulk resource file |
| AWS-native, CloudFormation | AWS IaC Generator → CDK Migrate |

Every month of ClickOps is another month of infrastructure that lives outside version control, outside review, outside any audit trail. The approaches exist. Pick the one that matches your scale.

Davlet Dzhakishev is CEO/CTO & Co-Founder of Cloudgeni, an agentic cloud operations platform. Former Software Engineer at Microsoft.