AI for Infrastructure That Doesn’t Break Production

If you’ve tried “AI writes Terraform,” you already know the truth: generating HCL is not the hard part.

The hard part is everything that happens after the code exists. Does it match your modules and conventions? Does it widen IAM in subtle ways? Will it replace critical resources? Will it still be correct after someone makes a “quick fix” in the console?

So this isn’t an article about writing IaC faster. It’s about using AI for infrastructure in a way that holds up in production.

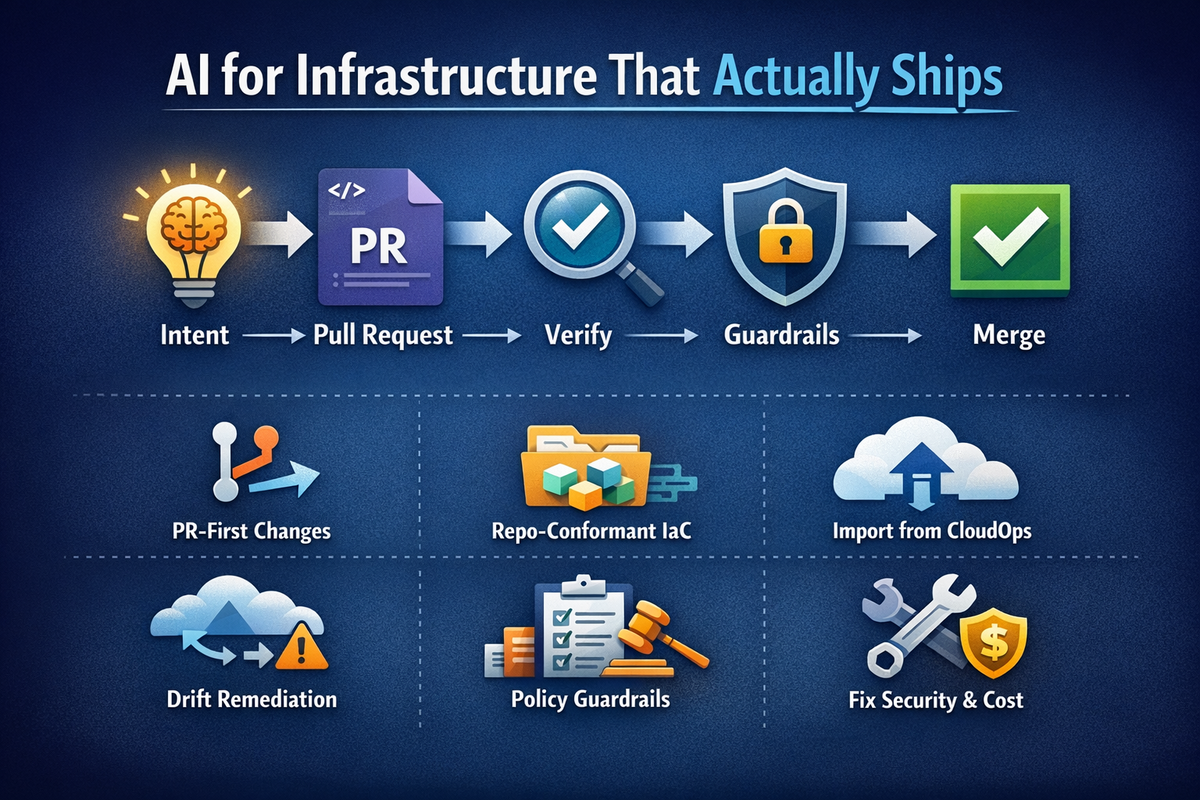

The only pattern I’ve seen that scales safely is simple:

Intent → Pull Request → Verification → Guardrails → Merge

Cloudgeni is built around this constraint. AI proposes changes, but your existing engineering controls decide what ships.
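To make the shape of that pipeline concrete, here is a minimal Python sketch of the gating logic. The `Change` record, its fields, and the three gate checks are hypothetical placeholders, not Cloudgeni’s implementation. The only idea it encodes is that a change merges when every gate passes, and that every failed gate is reported, not just the first one.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Change:
    """Hypothetical record of one AI-proposed change moving through the pipeline."""
    intent: str
    diff: str                       # the PR diff reviewers will see
    plan_ok: bool = False           # did plan/validation succeed?
    violations: List[str] = field(default_factory=list)  # policy findings


Gate = Tuple[str, Callable[[Change], bool]]

# Illustrative gates, listed in pipeline order.
GATES: List[Gate] = [
    ("pull_request", lambda c: bool(c.diff.strip())),  # a reviewable diff exists
    ("verification", lambda c: c.plan_ok),             # plan/validation passed
    ("guardrails", lambda c: not c.violations),        # no policy violations
]


def can_merge(change: Change, gates: List[Gate] = GATES) -> Tuple[bool, List[str]]:
    """Evaluate every gate; merging is allowed only if none of them fail."""
    failed = [name for name, check in gates if not check(change)]
    return (not failed, failed)
```

Note that nothing in the sketch lets the AI skip a gate: it can only produce the `Change`, and the gates decide.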

Why most “AI for Terraform” breaks down

Most failures aren’t syntax errors. They’re context errors.

Generic AI output tends to be “reasonable” but not “yours.” It doesn’t automatically understand your module boundaries, your naming and tagging rules, your provider constraints, or your internal risk tolerance. And when it’s under-specified, it often “solves” the problem by being permissive: broader IAM permissions, public exposure, an oversized blast radius.

That’s why the goal can’t be “generate code.” The goal is “generate a change you can approve.”

Two types of drift matter here, because they get confused all the time:

Policy drift is when a proposed change violates your rules (security, governance, internal standards). Configuration drift is when the real cloud diverges from what your Infrastructure as Code says.

Production-safe AI workflows should address both.

Production-safe AI workflows

Intent to PR

If AI is only producing snippets in a chat window, it stays a personal productivity hack. The moment you want a team workflow, you need a pull request boundary.

PRs are where reality lives: reviewers, CI checks, history, rollback. They’re also where you can force the two questions that matter: “What will change?” and “Are we okay with it?”

A good AI workflow starts with intent and ends with a diff that can be reviewed and tested.
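One practical way to answer “What will change?” is to summarize Terraform’s machine-readable plan. The sketch below assumes you have run `terraform plan -out=plan.tfplan` followed by `terraform show -json plan.tfplan`. It reads the `resource_changes[].change.actions` lists from that JSON and groups resource addresses by action, treating a delete-plus-create pair as a replace. The grouping labels are my own choice, not Terraform output.

```python
import json
from typing import Dict, List


def classify(actions: List[str]) -> str:
    """Collapse Terraform's change.actions list into one reviewer-facing label."""
    if sorted(actions) == ["create", "delete"]:
        return "replace"  # delete-then-create or create-before-destroy
    return "+".join(actions)  # e.g. "create", "update", "delete", "no-op", "read"


def summarize_plan(plan_json: str) -> Dict[str, List[str]]:
    """Group resource addresses by action, skipping entries that change nothing."""
    plan = json.loads(plan_json)
    grouped: Dict[str, List[str]] = {}
    for rc in plan.get("resource_changes", []):
        label = classify(rc["change"]["actions"])
        if label in ("no-op", "read"):
            continue
        grouped.setdefault(label, []).append(rc["address"])
    return grouped
```

Putting the “replace” group at the top of the PR description makes the riskiest line items hard to miss.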

Repo-conformant changes

Most platform teams don’t lack Terraform. They lack consistency.

AI becomes useful when you make it operate inside your repo conventions instead of fighting them. That means it should respect module patterns, folder layout, provider versions, naming and tagging rules, and the boundaries between platform and application teams.

In practice, this is where “AI for infrastructure” stops being flashy and starts being valuable: it helps you keep standards without requiring every engineer to memorize them.
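As a sketch of what “respecting conventions” can look like as an automated check, the Python below flags names and tags that don’t match a house style. The name pattern, the required tag set, and the simplified resource dicts are all hypothetical. In practice you might build those dicts from the `change.after` values in `terraform show -json` output.

```python
import re
from typing import Dict, List

# Hypothetical conventions: "<env>-<words>" names and three mandatory tags.
NAME_PATTERN = re.compile(r"(dev|stg|prod)-[a-z0-9]+(-[a-z0-9]+)*")
REQUIRED_TAGS = {"owner", "env", "cost-center"}


def check_conventions(resources: List[Dict]) -> List[str]:
    """Return one message per naming or tagging problem found."""
    problems: List[str] = []
    for r in resources:
        name = r.get("name", "")
        if not NAME_PATTERN.fullmatch(name):
            problems.append(f"{r['address']}: name {name!r} does not match convention")
        missing = REQUIRED_TAGS - set(r.get("tags") or {})
        if missing:
            problems.append(f"{r['address']}: missing tags {sorted(missing)}")
    return problems
```

Feeding these messages back to the AI as constraints is usually cheaper than correcting them in review.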

ClickOps to IaC import (turn console into code)

Every organization has ClickOps, even the ones that insist they don’t. It’s not a moral failure; it’s what happens when teams move fast and outages are expensive.

The problem is that unmanaged resources become unknown risk and unknown cost. The fastest way to reduce both is to bring reality under Infrastructure as Code.

Import is exactly the kind of work people avoid because it’s tedious and detail-heavy. That’s why AI can help: it can accelerate discovery, propose how to group resources into sane modules, generate import-ready code, and package it into PRs that reviewers can actually understand.

If you want quick ROI, this is one of the best places to start.
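Here is one way the “generate import-ready code” step could look, assuming Terraform 1.5 or later, which supports declarative `import` blocks. The `Discovered` inventory record is hypothetical (it stands in for whatever your discovery step produces), and the `id` format is provider-specific: for an `aws_s3_bucket`, for example, it is the bucket name.

```python
import re
from dataclasses import dataclass
from typing import List

# Terraform resource names start with a letter or underscore, followed by
# letters, digits, underscores, or hyphens.
_TF_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_-]*")


@dataclass(frozen=True)
class Discovered:
    """Hypothetical inventory entry produced by a discovery step."""
    tf_type: str   # Terraform resource type, e.g. "aws_s3_bucket"
    name: str      # resource name you want in code, e.g. "logs"
    cloud_id: str  # provider-specific import ID


def import_blocks(items: List[Discovered]) -> str:
    """Render Terraform `import` blocks, sorted so reruns give a stable diff."""
    rendered = []
    for it in sorted(items, key=lambda i: (i.tf_type, i.name)):
        if not _TF_NAME.fullmatch(it.name):
            raise ValueError(f"invalid Terraform resource name: {it.name!r}")
        rendered.append(
            "import {\n"
            f"  to = {it.tf_type}.{it.name}\n"
            f'  id = "{it.cloud_id}"\n'
            "}\n"
        )
    return "\n".join(rendered)
```

With the blocks committed, `terraform plan -generate-config-out=generated.tf` can draft matching resource configuration, which you then fold into your modules before review.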

Drift

Most teams either don’t measure configuration drift consistently, or they detect it and then never fix it because remediation becomes toil. The result is predictable: IaC stops being trusted, and people return to the console.

The workflow that works is closed-loop drift handling: detect drift on a schedule, summarize blast radius in human language, and propose remediation as a PR.

You decide the direction based on your operating model. Sometimes the “real” environment is the intended state and you update code to match. Other times code is the intended state and you revert out-of-band changes. The point is not ideology. The point is regaining control.
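For the “detect drift on a schedule” step, here is a minimal sketch assuming the Terraform CLI is on `PATH` and the working directory is already initialized. `terraform plan -refresh-only` compares real infrastructure against state, and `-detailed-exitcode` makes the result machine-readable: 0 for no changes, 1 for an error, 2 for changes. Wiring the output into a summarizer and a PR bot is left out.

```python
import subprocess
from typing import Tuple


def interpret_exit_code(code: int) -> str:
    """Map `terraform plan -detailed-exitcode` results to a drift status."""
    if code == 0:
        return "in_sync"  # plan succeeded with an empty diff
    if code == 2:
        return "drift"    # plan succeeded with a non-empty diff
    return "error"        # 1 means the plan failed; treat anything else as an error too


def check_drift(workdir: str) -> Tuple[str, str]:
    """Run a refresh-only plan in `workdir`; return (status, plan text)."""
    result = subprocess.run(
        ["terraform", "plan", "-refresh-only", "-detailed-exitcode",
         "-input=false", "-no-color"],
        cwd=workdir,
        capture_output=True,
        text=True,
    )
    return interpret_exit_code(result.returncode), result.stdout
```

Run it from whatever scheduler you already trust (a CI cron job, for instance). Only the “drift” status should trigger the summarize-and-propose step, and “error” should page a human rather than open a PR.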

Policy restrictions at PR

Guardrails that aren’t enforced become documentation. If you want AI to be safe at scale, your policies need to run on every PR, and ideally they should evaluate the effective change, not just static text.

This is where platform teams get serious: you make certain classes of changes simply unshippable. Public exposure by default. Missing required tags. Dangerous security group rules. “Delete/replace” actions without explicit approval. Region allowlists. Encryption requirements.
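To illustrate evaluating the effective change rather than static text, here is a plain-Python stand-in for a policy engine that reads the parsed JSON from `terraform show -json`. In practice teams often use a dedicated tool such as Open Policy Agent with Conftest, or HashiCorp Sentinel. The required tags, region allowlist, and approval set below are hypothetical, and the region is passed in rather than parsed from provider configuration.

```python
from typing import Dict, List, Set

# Hypothetical organization-wide settings; replace with your own.
REQUIRED_TAGS: Set[str] = {"owner", "env"}
ALLOWED_REGIONS: Set[str] = {"eu-west-1", "eu-central-1"}


def evaluate(plan: Dict, region: str, approved_destroys: Set[str]) -> List[str]:
    """Return human-readable violations for the effective change in a plan.

    `plan` is the parsed output of `terraform show -json <planfile>`.
    `region` comes from pipeline config (an assumption; it is not parsed here).
    """
    violations: List[str] = []
    if region not in ALLOWED_REGIONS:
        violations.append(f"region {region!r} is not in the allowlist")

    for rc in plan.get("resource_changes", []):
        addr = rc["address"]
        actions = rc["change"]["actions"]
        after = rc["change"].get("after") or {}

        # Deletes and replaces both include a "delete" action.
        if "delete" in actions and addr not in approved_destroys:
            violations.append(f"{addr}: delete/replace requires explicit approval")

        if "create" not in actions and "update" not in actions:
            continue  # nothing new is written for this resource

        # Only check tags on resources that expose a `tags` attribute.
        if "tags" in after:
            missing = REQUIRED_TAGS - set(after.get("tags") or {})
            if missing:
                violations.append(f"{addr}: missing required tags {sorted(missing)}")

        # Example public-exposure rule for the AWS provider's standalone rule resource.
        if (rc["type"] == "aws_security_group_rule"
                and after.get("type") == "ingress"
                and "0.0.0.0/0" in (after.get("cidr_blocks") or [])):
            violations.append(f"{addr}: ingress open to 0.0.0.0/0")

    return violations
```

A CI job would fail the PR whenever the returned list is non-empty.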

AI can help here too, but not by “deciding.” Its job is to explain violations clearly and propose minimal fixes that pass policy without inflating blast radius.
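“Minimal” can be made checkable. Below is a sketch of a fix for a missing-tags violation that only adds what is missing and never rewrites existing values. The `TODO-review` placeholder is a hypothetical convention that forces a reviewer to supply the real value instead of letting the AI guess it.

```python
from typing import Dict, Iterable, Mapping

PLACEHOLDER = "TODO-review"  # hypothetical marker a reviewer must replace


def minimal_tag_fix(current: Mapping[str, str], required: Iterable[str]) -> Dict[str, str]:
    """Propose only the missing required tags; existing tags are left untouched."""
    return {key: PLACEHOLDER for key in sorted(required) if key not in current}


def is_additive(before: Mapping[str, str], after: Mapping[str, str]) -> bool:
    """True if `after` keeps every existing tag unchanged and only adds new ones."""
    return all(after.get(k) == v for k, v in before.items())
```

Running `is_additive` on any AI-proposed tag change is a cheap way to reject “fixes” that quietly rewrite ownership or environment tags.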

Fix PRs for security + cost findings

Security and cost backlogs grow because scanners and reviews usually produce tickets, not patches.

The production-safe AI workflow is: take a finding (security or cost), propose the safe remediation, show the expected impact, and open a PR that passes the same checks as any other infra change.
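Here is a sketch of the “show the expected impact” part: rendering a finding into a PR body that states the problem, the fix, and the impact, with the same checklist any other infra PR carries. The `Finding` fields and check names are hypothetical, and actually opening the PR is left to your Git host’s API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    """Hypothetical shape of a security or cost finding."""
    kind: str      # "security" or "cost"
    resource: str  # Terraform address, e.g. "aws_s3_bucket.logs"
    summary: str   # what is wrong
    fix: str       # the remediation the PR applies
    impact: str    # expected effect: exposure removed, estimated monthly saving, ...


def pr_description(f: Finding, required_checks: List[str]) -> str:
    """Render a PR body that states the finding, the fix, and its expected impact."""
    lines = [
        f"[{f.kind}] {f.summary}",
        "",
        f"Resource: {f.resource}",
        f"Proposed fix: {f.fix}",
        f"Expected impact: {f.impact}",
        "",
        "Required checks (same as any infra change):",
    ]
    lines += [f"- [ ] {check}" for check in required_checks]
    return "\n".join(lines)
```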

For cost, be disciplined. Start with low-risk wins (obvious unused resources, clear overprovisioning with safety margin) before touching anything that affects latency, availability, or throughput. AI can suggest plenty of “savings.” Your job is to only ship the ones you can defend.
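To make “overprovisioning with safety margin” concrete, here is a rightsizing rule that only recommends a smaller size if it still covers observed peak usage plus headroom. The 30% default margin and the size table are hypothetical. For example, a peak of 1.2 vCPUs on an 8-vCPU instance needs 1.2 × 1.3 = 1.56 vCPUs, so a 2-vCPU size qualifies; a peak of 3.5 needs 4.55, so no smaller size in the table is safe.

```python
from typing import Dict, Optional


def rightsize(peak_vcpus_used: float, current_vcpus: int,
              sizes: Dict[str, int], margin: float = 0.3) -> Optional[str]:
    """Return the smallest size that still covers peak usage plus a safety margin.

    Returns None when no smaller size is safe, meaning "do not ship a change".
    """
    needed = peak_vcpus_used * (1 + margin)
    candidates = [(vcpus, name) for name, vcpus in sizes.items()
                  if needed <= vcpus < current_vcpus]
    return min(candidates)[1] if candidates else None
```

Returning `None` instead of the “closest” size is deliberate: a savings PR you can’t defend is worse than no PR.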

A small starting plan

A good starting sequence could look like this. Pick one repo and one environment. Turn on weekly drift detection. Add a small set of guardrails you’re actually willing to enforce. Then use AI for PR-sized changes only.

If you can’t measure outcomes, you won’t keep momentum. Track a few hard numbers: reduced drift over time, review cycle time, incident rate, and cost deltas where you’re confident.
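Two of those numbers are simple to compute once you export the data. Here is a sketch, assuming you can pull PR opened/merged timestamps from your Git host and a weekly count of drifted resources from your drift job; both input shapes are hypothetical.

```python
from datetime import datetime
from statistics import median
from typing import List, Tuple


def review_cycle_hours(prs: List[Tuple[str, str]]) -> float:
    """Median hours from PR opened to PR merged.

    Timestamps are ISO 8601 strings without a trailing "Z"
    (datetime.fromisoformat only accepts "Z" from Python 3.11).
    """
    hours = [
        (datetime.fromisoformat(merged) - datetime.fromisoformat(opened)).total_seconds() / 3600
        for opened, merged in prs
    ]
    return median(hours)


def drift_change(weekly_drifted: List[int]) -> int:
    """Latest weekly count of drifted resources minus the first one (negative is good)."""
    return weekly_drifted[-1] - weekly_drifted[0]
```

The median is used instead of the mean so that one PR left open over a holiday doesn’t dominate the number.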

A small prompt pack

Use prompts that force bounded changes and verification. For example:

“Propose the smallest Terraform change to enable X. Use our existing modules, naming, and tags. Output a PR.”

“Detect drift in prod, summarize blast radius, and open a PR to reconcile with minimal risk.”

“Run policy checks on this change and propose minimal fixes for critical violations (do not widen IAM).”

FAQ

Is AI safe for Terraform and Infrastructure as Code?

Not by default. It becomes safe when AI output is constrained to PRs, verified by plan/validation, and gated by policies you enforce consistently.

What’s the biggest mistake teams make with AI for infrastructure?

Letting AI output bypass the PR boundary, or treating “looks right” as verification.

Where should I start if our infrastructure is messy?

ClickOps-to-IaC import and drift. Bring reality under code, then keep it from drifting again.

Closing

AI won’t replace infrastructure engineering. It will compress the boring parts and amplify your standards if you force it to operate inside the controls you already trust.

If you want faster delivery without higher risk, don’t chase “autonomous changes.” Build PR-first workflows where intent becomes a diff, verification reduces uncertainty, and guardrails prevent the changes you never want in production.